It may be surprising when a new InnoDB Cluster is set up, and despite not being in production yet and completely idle, it manifests a significant amount of writes visible in growing binary logs. This effect became much more spectacular after MySQL version 8.4. In this write-up, I will explain why it happens and how to address […]


21

2025

MySQL Router 8.4: How to Deal with Metadata Updates Overhead

14

2024

Effective Strategies for Recovering MySQL Group Replication From Failures

Group replication is a fault-tolerant/highly available replication topology that ensures if the primary node goes down, one of the other candidates or secondary members takes over so write and read operations can continue without any interruptions. However, there are some scenarios where, due to outages, network partitions, or database crashes, the group membership could be broken, or we end […]


24

2024

Understanding Basic Flow Control Activity in MySQL Group Replication: Part One

Flow control is not a new term, and we have already heard it a lot of times in Percona XtraDB Cluster/Galera-based environments. In very simple terms, it means the cluster node can’t keep up with the cluster write pace. The write rate is too high, or the nodes are oversaturated. Flow control helps avoid excessive […]


09

2024

How to Use Group Replication with Haproxy

When working with group replication, MySQL Router would be the obvious choice for the connection layer. It is tightly coupled with the rest of the technologies since it is part of the InnoDB Cluster stack. The problem is that, except for simple workloads, MySQL Router’s performance is still not on par with other proxies like HAProxy […]


11

2022

Online DDL With Group Replication in MySQL 8.0.27

In April 2021, I wrote an article about Online DDL and Group Replication. At that time we were dealing with MySQL 8.0.23 and also opened a bug report which did not have the right answer to the case presented.


In that article, I showed how an online DDL was de facto locking the whole cluster for a very long time, even with the consistency level set to EVENTUAL.

This article does justice to the work done by the MySQL/Oracle engineers to correct that annoying inconvenience.

Before going ahead, let us recall how an Online DDL was propagated in a Group Replication cluster, and identify the differences with what happens now, all with the consistency level set to EVENTUAL.

In MySQL 8.0.23 we were having:

[Figure: the three phases of Online DDL propagation in MySQL 8.0.23]

While in MySQL 8.0.27 we have:

[Figure: the three phases of Online DDL propagation in MySQL 8.0.27]

As you can see from the images we have three different phases. Phase one is the same between version 8.0.23 and version 8.0.27.

Phases two and three, instead, are quite different. In MySQL 8.0.23, after the DDL was applied on the Primary, it was propagated to the other nodes, but a metadata lock was also acquired and control was NOT returned. As a result, not only was the session executing the DDL kept on hold, but so were all the other sessions performing modifications.

Only when the operation was over on all secondaries was the DDL pushed to the binlog and disseminated for asynchronous replication; the lock was then released and operations could resume.

Instead, in MySQL 8.0.27, once the operation is over on the primary, the DDL is pushed to the binlog, disseminated to the secondaries, and control is returned. As a result, write operations on the primary are not interrupted at all, and the DDL is distributed to the secondaries and to asynchronous replication at the same time.

This is a fantastic improvement, available only with consistency level EVENTUAL, but still, fantastic.

Let’s See Some Numbers

To test the operation, I have used the same approach used in the previous tests in the article mentioned above.

Connection 1:

ALTER TABLE windmills_test ADD INDEX idx_1 (`uuid`,`active`), ALGORITHM=INPLACE, LOCK=NONE;

ALTER TABLE windmills_test drop INDEX idx_1, ALGORITHM=INPLACE;

Connection 2:

while [ 1 = 1 ];do da=$(date +'%s.%3N');/opt/mysql_templates/mysql-8P/bin/mysql --defaults-file=./my.cnf -uroot -D windmills_large -e "insert into windmills_test select null,uuid,millid,kwatts_s,date,location,active,time,strrecordtype from windmill7 limit 1;" -e "select count(*) from windmills_large.windmills_test;" > /dev/null;db=$(date +'%s.%3N'); echo "$(echo "($db - $da)"|bc)";sleep 1;done

Connection 3:

while [ 1 = 1 ];do da=$(date +'%s.%3N');/opt/mysql_templates/mysql-8P/bin/mysql --defaults-file=./my.cnf -uroot -D windmills_large -e "insert into windmill8 select null,uuid,millid,kwatts_s,date,location,active,time,strrecordtype from windmill7 limit 1;" -e "select count(*) from windmills_large.windmills_test;" > /dev/null;db=$(date +'%s.%3N'); echo "$(echo "($db - $da)"|bc)";sleep 1;done

Connections 4-5:

while [ 1 = 1 ];do echo "$(date +'%T.%3N')";/opt/mysql_templates/mysql-8P/bin/mysql --defaults-file=./my.cnf -uroot -D windmills_large -e "show full processlist;"|egrep -i -e "(windmills_test|windmills_large)"|grep -i -v localhost;sleep 1;done

Modifying a table with ~5 million rows:

    node1-DC1 (root@localhost) [windmills_large]>select count(*) from windmills_test;
    +----------+
    | count(*) |
    +----------+
    |  5002909 |
    +----------+

The numbers below are the time, in seconds with millisecond precision, that each operation took to complete. I was also capturing the state of the ALTER on the other node, but I am not reporting it here because it is not relevant.

    EVENTUAL (on the primary only)
    -------------------
    Node 1 same table:
    .184
    .186 <--- no locking during alter on the same node
    .184
    <snip>
    .184
    .217 <--- moment of commit
    .186
    .186
    .186
    .185

    Node 1 another table :
    .189
    .198 <--- no locking during alter on the same node
    .188
    <snip>
    .191
    .211 <--- moment of commit
    .194

As you can see, there is just a very small delay at the moment of commit, but no other impact.
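To spot the commit moment in a long list of samples without reading every line, a small filter can help. This is only a sketch: `peak_latency` is a hypothetical helper name, and it assumes one timing per line, in the format printed by the loops in Connections 2 and 3.

```shell
#!/bin/sh
# Hypothetical helper (not part of the original test): report the position
# and value of the slowest sample. Input: one timing in seconds per line.
peak_latency() {
    awk '
        # Only consider plain decimal numbers; lines such as "<snip>" are skipped.
        /^[0-9]*\.?[0-9]+$/ {
            n++
            if (n == 1 || $1 + 0 > max) { max = $1 + 0; at = n }
        }
        END { if (n > 0) printf "%d %.3f\n", at, max }
    '
}

# Samples from the "same table" run above; prints the sample index and the peak.
printf '%s\n' .184 .186 .184 .184 .217 .186 .186 .186 .185 | peak_latency
```

The output is the 1-based index of the slowest sample followed by its value, which makes it easy to line up the peak with the moment the ALTER committed.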

Now if we compare this with the recent tests I have done for Percona XtraDB Cluster (PXC) Non-Blocking operation (see A Look Into Percona XtraDB Cluster Non-Blocking Operation for Online Schema Upgrade) with the same number of rows and same kind of table/data:

| Action | Group Replication | PXC (NBO) |
|---|---|---|
| Time on hold for insert for altering table | ~ 0.217 sec | ~ 120 sec |
| Time on hold for insert for another table | ~ 0.211 sec | ~ 25 sec |

However (yes, there is a however), PXC was maintaining consistency between the different nodes during the DDL execution, while MySQL 8.0.27 with Group Replication was postponing consistency on the secondaries. Thus, Primary and Secondary were not in sync until the DDL was fully finalized on the secondaries.

Conclusions

MySQL 8.0.27 comes with this nice fix that significantly reduces the impact of an online DDL operation on a busy server. But we can still observe a significant misalignment of the data between the nodes when a DDL is executing.

On the other hand, PXC with NBO is a bit more “expensive” in time, but nodes remain aligned all the time.

In the end, what matters more to you, consistency or operational impact, determines which solution to choose.

Great MySQL to all.

19

2021

MySQL Group Replication: Conversion of GR Member to Async Replica (and Back) In the Same Cluster

MySQL Group Replication is a plugin that helps to implement highly available fault-tolerant replication topologies. In this blog, I am going to explain the complete steps involved in the below two topics.


- How to convert the group replication member to an asynchronous replica

- How to convert the asynchronous replica to a group replication member

Why Am I Converting From GR Back to Old Async?

Recently I had a requirement from one of our customers running a 5-node GR cluster. Once a month, they run a bulk read job to generate business reports. While the job is running, it affects the overall cluster performance because of flow control issues: the node executing the read job is overloaded, which delays the certification and write-apply process. The read job queries can’t be split across the cluster, so they don’t want that particular node to be part of the cluster during report generation. So, I recommended this approach. The overall job takes 3-4 hours. During that time, the topology will be a 4-node cluster and one asynchronous replica. Once the job is completed, the async replica node will rejoin the GR cluster.

For testing this, I have installed and configured a group replication cluster with 5 nodes ( gr1,gr2,gr3,gr4,gr5 ). The cluster is operating in single-primary mode.

    mysql> select member_host,member_state,member_role,member_version from performance_schema.replication_group_members;
    +-------------+--------------+-------------+----------------+
    | member_host | member_state | member_role | member_version |
    +-------------+--------------+-------------+----------------+
    | gr5         | ONLINE       | SECONDARY   | 8.0.22         |
    | gr4         | ONLINE       | SECONDARY   | 8.0.22         |
    | gr3         | ONLINE       | SECONDARY   | 8.0.22         |
    | gr2         | ONLINE       | SECONDARY   | 8.0.22         |
    | gr1         | ONLINE       | PRIMARY     | 8.0.22         |
    +-------------+--------------+-------------+----------------+
    5 rows in set (0.00 sec)

Using Percona Server for MySQL 8.0.22.

    mysql> select @@version, @@version_comment\G
    *************************** 1. row ***************************
    @@version: 8.0.22-13
    @@version_comment: Percona Server (GPL), Release 13, Revision 6f7822f
    1 row in set (0.01 sec)

How to Convert the Group Replication Member to Asynchronous Replica?

To explain this topic,

- I am going to convert the group replication member “gr5” to an asynchronous replica.

- The GR member “gr4” will be the source for “gr5”.

Current status:

    +-------------+--------------+-------------+----------------+
    | member_host | member_state | member_role | member_version |
    +-------------+--------------+-------------+----------------+
    | gr5         | ONLINE       | SECONDARY   | 8.0.22         |
    | gr4         | ONLINE       | SECONDARY   | 8.0.22         |
    | gr3         | ONLINE       | SECONDARY   | 8.0.22         |
    | gr2         | ONLINE       | SECONDARY   | 8.0.22         |
    | gr1         | ONLINE       | PRIMARY     | 8.0.22         |
    +-------------+--------------+-------------+----------------+

Step 1:

Remove the node “gr5” from the cluster.

    gr5 > stop group_replication;
    Query OK, 0 rows affected (4.64 sec)

Current cluster status:

    +-------------+--------------+-------------+----------------+
    | member_host | member_state | member_role | member_version |
    +-------------+--------------+-------------+----------------+
    | gr4         | ONLINE       | SECONDARY   | 8.0.22         |
    | gr3         | ONLINE       | SECONDARY   | 8.0.22         |
    | gr2         | ONLINE       | SECONDARY   | 8.0.22         |
    | gr1         | ONLINE       | PRIMARY     | 8.0.22         |
    +-------------+--------------+-------------+----------------+

Current “gr5” status:

    +-------------+--------------+-------------+----------------+
    | member_host | member_state | member_role | member_version |
    +-------------+--------------+-------------+----------------+
    | gr5         | OFFLINE      |             |                |
    +-------------+--------------+-------------+----------------+
    1 row in set (0.00 sec)

Step 2:

Update the connection parameters to not allow communication with other Cluster nodes.

    gr5 > select @@group_replication_group_seeds, @@group_replication_ip_whitelist\G
    *************************** 1. row ***************************
    @@group_replication_group_seeds: 172.28.128.23:33061,172.28.128.22:33061,172.28.128.21:33061,172.28.128.20:33061,172.28.128.19:33061
    @@group_replication_ip_whitelist: 172.28.128.23,172.28.128.22,172.28.128.21,172.28.128.20,172.28.128.19
    1 row in set (0.00 sec)

    gr5 > set global group_replication_group_seeds='';
    Query OK, 0 rows affected (0.00 sec)

    gr5 > set global group_replication_ip_whitelist='';
    Query OK, 0 rows affected, 1 warning (0.00 sec)

    gr5 > select @@group_replication_group_seeds, @@group_replication_ip_whitelist;
    +---------------------------------+----------------------------------+
    | @@group_replication_group_seeds | @@group_replication_ip_whitelist |
    +---------------------------------+----------------------------------+
    |                                 |                                  |
    +---------------------------------+----------------------------------+
    1 row in set (0.00 sec)

Step 3:

Remove the group replication channel configurations and the respective physical files. When group replication is configured, it creates two channels and their respective files (applier/recovery files).

    gr5 > select Channel_name from mysql.slave_master_info;
    +----------------------------+
    | Channel_name               |
    +----------------------------+
    | group_replication_applier  |
    | group_replication_recovery |
    +----------------------------+
    2 rows in set (0.00 sec)

    [root@gr5 mysql]# ls -lrth | grep -i replication
    -rw-r-----. 1 mysql mysql 225 Apr 12 19:18 gr5-relay-bin-group_replication_applier.000001
    -rw-r-----. 1 mysql mysql  98 Apr 12 19:18 gr5-relay-bin-group_replication_applier.index
    -rw-r-----. 1 mysql mysql 226 Apr 12 19:18 gr5-relay-bin-group_replication_recovery.000001
    -rw-r-----. 1 mysql mysql 273 Apr 12 19:18 gr5-relay-bin-group_replication_recovery.000002
    -rw-r-----. 1 mysql mysql 100 Apr 12 19:18 gr5-relay-bin-group_replication_recovery.index
    -rw-r-----. 1 mysql mysql 660 Apr 12 19:18 gr5-relay-bin-group_replication_applier.000002

We can remove them by resetting the replica status.

    gr5 > reset replica all;
    Query OK, 0 rows affected (0.02 sec)

    gr5 > select Channel_name from mysql.slave_master_info;
    Empty set (0.00 sec)

    [root@gr5 mysql]# ls -lrth | grep -i replication
    [root@gr5 mysql]#

Step 4:

Configure asynchronous replication. We don’t need to manually set the binlog/GTID positions, because Group Replication is GTID-based. The node was already a member of the same group, so it should already have the GTID entries.

    gr5 > select @@gtid_executed, @@gtid_purged\G
    *************************** 1. row ***************************
    @@gtid_executed: ae2434f6-2be4-4d15-a5dc-fd54919b79b0:1-8,
    b93e0429-989d-11eb-ad7b-5254004d77d3:1
    @@gtid_purged: ae2434f6-2be4-4d15-a5dc-fd54919b79b0:1-2,
    b93e0429-989d-11eb-ad7b-5254004d77d3:1
    1 row in set (0.00 sec)

We only need to run the CHANGE MASTER command.

    gr5 > change master to master_user='gr_repl',master_password='Repl@321',master_host='gr4',master_auto_position=1;
    Query OK, 0 rows affected, 2 warnings (0.05 sec)

    gr5 > start replica;
    Query OK, 0 rows affected (0.02 sec)

    gr5 > pager grep -i 'Master_Host\|Slave_IO_Running\|Slave_SQL_Running\|Seconds_Behind_Master'
    PAGER set to 'grep -i 'Master_Host\|Slave_IO_Running\|Slave_SQL_Running\|Seconds_Behind_Master''

    gr5 > show slave status\G
    Master_Host: gr4
    Slave_IO_Running: Yes
    Slave_SQL_Running: Yes
    Seconds_Behind_Master: 0
    Slave_SQL_Running_State: Slave has read all relay log; waiting for more updates
    1 row in set, 1 warning (0.00 sec)
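If you want to script the same check instead of reading the status by eye, the output can be piped through a small filter. This is a minimal sketch: `replica_healthy` is a hypothetical helper, and it assumes the `\G` (vertical) output format shown above. It accepts both the `Slave_*` field names shown here and the `Replica_*` names printed by `SHOW REPLICA STATUS`.

```shell
#!/bin/sh
# Hypothetical helper: succeed only if both replication threads report "Yes".
# Input on stdin: the vertical output of SHOW SLAVE STATUS\G (or SHOW REPLICA STATUS\G).
replica_healthy() {
    awk -F': *' '
        { sub(/^[ \t]+/, "", $1) }   # field names are right-aligned; drop the padding
        $1 == "Slave_IO_Running"  || $1 == "Replica_IO_Running"  { io = $2 }
        $1 == "Slave_SQL_Running" || $1 == "Replica_SQL_Running" { sql = $2 }
        END { exit ((io == "Yes" && sql == "Yes") ? 0 : 1) }
    '
}

# Demo with the values from the output above.
if replica_healthy <<'EOF'
Master_Host: gr4
Slave_IO_Running: Yes
Slave_SQL_Running: Yes
Seconds_Behind_Master: 0
Slave_SQL_Running_State: Slave has read all relay log; waiting for more updates
EOF
then echo "replication threads are running"
else echo "replication needs attention"
fi
```

Matching on the exact field name matters here, because `Slave_SQL_Running_State` shares a prefix with `Slave_SQL_Running`.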

So, finally, the current topology is:

- We have a 4-node group replication cluster ( gr1, gr2, gr3, gr4 ).

- The node “gr5” is configured as an async replica of “gr4”.

How to Convert the Async Replica to Group Replication Member?

To explain this topic:

- I am going to break the asynchronous replication on “gr5”.

- Then, I will join the node “gr5” to the group replication cluster.

Step 1:

Break replication on “gr5” and reset the replica.

    gr5 > stop replica;
    Query OK, 0 rows affected (0.00 sec)

    gr5 > reset replica all;
    Query OK, 0 rows affected (0.00 sec)

    gr5 > show replica status\G
    Empty set (0.00 sec)

Step 2:

Configure the connection parameters to join to the cluster.

    gr5 > select @@group_replication_group_seeds, @@group_replication_ip_whitelist;
    +---------------------------------+----------------------------------+
    | @@group_replication_group_seeds | @@group_replication_ip_whitelist |
    +---------------------------------+----------------------------------+
    |                                 |                                  |
    +---------------------------------+----------------------------------+
    1 row in set (0.00 sec)

    gr5 > set global group_replication_group_seeds='172.28.128.23:33061,172.28.128.22:33061,172.28.128.21:33061,172.28.128.20:33061,172.28.128.19:33061';
    Query OK, 0 rows affected (0.00 sec)

    gr5 > set global group_replication_ip_whitelist='172.28.128.23,172.28.128.22,172.28.128.21,172.28.128.20,172.28.128.19';
    Query OK, 0 rows affected, 1 warning (0.00 sec)

    gr5 > select @@group_replication_group_seeds, @@group_replication_ip_whitelist\G
    *************************** 1. row ***************************
    @@group_replication_group_seeds: 172.28.128.23:33061,172.28.128.22:33061,172.28.128.21:33061,172.28.128.20:33061,172.28.128.19:33061
    @@group_replication_ip_whitelist: 172.28.128.23,172.28.128.22,172.28.128.21,172.28.128.20,172.28.128.19
    1 row in set (0.00 sec)

Step 3:

Configure the channel for “group_replication_recovery”.

    gr5 > change master to master_user='gr_repl',master_password='Repl@321' for channel 'group_replication_recovery';
    Query OK, 0 rows affected, 2 warnings (0.02 sec)

Note: No need to configure the GTID parameters before starting the group replication service, because the node was already configured as an async replica in the same group. So, when starting the group replication service, it will automatically start with the last GTID position executed by async replication and start to sync the rest of the data.

Step 4:

Start the group replication service

    gr5 > start group_replication;
    Query OK, 0 rows affected, 1 warning (3.02 sec)

Final status:

    mysql> select member_host,member_state,member_role,member_version from performance_schema.replication_group_members;
    +-------------+--------------+-------------+----------------+
    | member_host | member_state | member_role | member_version |
    +-------------+--------------+-------------+----------------+
    | gr5         | ONLINE       | SECONDARY   | 8.0.22         |
    | gr4         | ONLINE       | SECONDARY   | 8.0.22         |
    | gr3         | ONLINE       | SECONDARY   | 8.0.22         |
    | gr2         | ONLINE       | SECONDARY   | 8.0.22         |
    | gr1         | ONLINE       | PRIMARY     | 8.0.22         |
    +-------------+--------------+-------------+----------------+
    5 rows in set (0.00 sec)
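When the rejoin is part of a scheduled job, the final status check can be automated as well. The following is a sketch under assumptions: `all_members_online` is a hypothetical helper, and it expects tab-separated `member_host` and `member_state` columns, as produced by the `mysql` client in batch mode without column names (`-N -B`).

```shell
#!/bin/sh
# Hypothetical helper: succeed only if exactly $1 members are listed
# and every one of them is ONLINE.
# Input on stdin: "<member_host><TAB><member_state>" per line.
all_members_online() {
    awk -F'\t' -v expected="$1" '
        $2 == "ONLINE" { online++ }
        END { exit ((NR == expected + 0 && online + 0 == NR) ? 0 : 1) }
    '
}

# Demo with the final status shown above (5 members, all ONLINE).
if printf 'gr5\tONLINE\ngr4\tONLINE\ngr3\tONLINE\ngr2\tONLINE\ngr1\tONLINE\n' | all_members_online 5
then echo "cluster is back to 5 ONLINE members"
else echo "cluster is not fully ONLINE yet"
fi
```

In a real job, the input would come from a query against `performance_schema.replication_group_members`; a member that is still in another state, such as the `OFFLINE` state shown earlier, makes the check fail.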

I hope this blog post will be helpful to someone, who is learning or working with MySQL Group replication.

Cheers!

05

2018

How to Quickly Add a Node to an InnoDB Cluster or Group Replication

In this blog, we’ll look at how to quickly add a node to an InnoDB Cluster or Group Replication using Percona XtraBackup.

Adding nodes to a Group Replication cluster can be easy (documented here), but it only works if the existing nodes have retained all the binary logs since the creation of the cluster. Obviously, this is possible if you create a new cluster from scratch. The nodes rotate old logs after some time, however. Technically, if the `gtid_purged` set is non-empty, it means you will need another method to add a new node to a cluster. You also need a different method if data becomes inconsistent across cluster nodes for any reason. For example, you might hit something similar to this bug, or fall prey to human error.
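The rule of thumb above can be written as a tiny check. This is a sketch only: `needs_backup_based_join` is a hypothetical helper that takes the donor's `gtid_purged` value as a string (however you obtained it) and applies the "non-empty means the binary logs are incomplete" rule from the paragraph above.

```shell
#!/bin/sh
# Hypothetical helper: succeed if the donor has purged any transactions,
# meaning a new node cannot be built from the binary logs alone.
# $1: the donor's @@GLOBAL.gtid_purged value (may contain whitespace/newlines).
needs_backup_based_join() {
    purged=$(printf '%s' "$1" | tr -d ' \t\n')
    [ -n "$purged" ]
}

# Demo with the gtid_purged value from the donor used later in this post.
if needs_backup_based_join "aaaaaaaa-aaaa-aaaa-aaaa-aaaaaaaaaaaa:1-295538"
then echo "binary logs were purged: seed the joiner from a backup"
else echo "all binary logs are available: regular recovery can be used"
fi
```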

Hot Backup to the Rescue

The quick and simple method I’ll present here requires the Percona XtraBackup tool to be installed, as well as some additional small tools for convenience. I tested my example on CentOS 7, but it works similarly on other Linux distributions. First of all, you will need the Percona repository installed:

    # yum install http://www.percona.com/downloads/percona-release/redhat/0.1-6/percona-release-0.1-6.noarch.rpm -y -q

Then, install Percona XtraBackup and the additional tools. You might need to enable the EPEL repo for the additional tools and the experimental Percona repo for XtraBackup 8.0 that works with MySQL 8.0. (Note: XtraBackup 8.0 is still not GA when writing this article, and we do NOT recommend or advise that you install XtraBackup 8.0 into a production environment until it is GA). For MySQL 5.7, XtraBackup 2.4 from the regular repo is what you are looking for:

    # grep -A3 percona-experimental-\$basearch /etc/yum.repos.d/percona-release.repo
    [percona-experimental-$basearch]
    name = Percona-Experimental YUM repository - $basearch
    baseurl = http://repo.percona.com/experimental/$releasever/RPMS/$basearch
    enabled = 1

    # yum install pv pigz nmap-ncat percona-xtrabackup-80 -q
    ==============================================================================================================================================
     Package                    Arch      Version                Repository                     Size
    ==============================================================================================================================================
    Installing:
     nmap-ncat                  x86_64    2:6.40-13.el7          base                           205 k
     percona-xtrabackup-80      x86_64    8.0.1-2.alpha2.el7     percona-experimental-x86_64     13 M
     pigz                       x86_64    2.3.4-1.el7            epel                            81 k
     pv                         x86_64    1.4.6-1.el7            epel                            47 k
    Installing for dependencies:
     perl-DBD-MySQL             x86_64    4.023-6.el7            base                           140 k

    Transaction Summary
    ==============================================================================================================================================
    Install  4 Packages (+1 Dependent package)

    Is this ok [y/d/N]: y
    #

You need to do it on both the source and destination nodes. Now, my existing cluster node (I will call it a donor) – gr01 looks like this:

gr01 > select * from performance_schema.replication_group_members\G

*************************** 1. row ***************************

CHANNEL_NAME: group_replication_applier

MEMBER_ID: 76df8268-c95e-11e8-b55d-525400cae48b

MEMBER_HOST: gr01

MEMBER_PORT: 3306

MEMBER_STATE: ONLINE

MEMBER_ROLE: PRIMARY

MEMBER_VERSION: 8.0.13

1 row in set (0.00 sec)

gr01 > show global variables like 'gtid%';

+----------------------------------+-----------------------------------------------+

| Variable_name | Value |

+----------------------------------+-----------------------------------------------+

| gtid_executed | aaaaaaaa-aaaa-aaaa-aaaa-aaaaaaaaaaaa:1-302662 |

| gtid_executed_compression_period | 1000 |

| gtid_mode | ON |

| gtid_owned | |

| gtid_purged | aaaaaaaa-aaaa-aaaa-aaaa-aaaaaaaaaaaa:1-295538 |

+----------------------------------+-----------------------------------------------+

5 rows in set (0.01 sec)

The new node candidate (I will call it a joiner) – gr02, has no data but the same MySQL version installed. It also has the required settings in place, like the existing node address in group_replication_group_seeds, etc. The next step is to stop the MySQL service on the joiner (if already running), and wipe out its datadir:

[root@gr02 ~]# rm -fr /var/lib/mysql/*

and start the “listener” process, that waits to receive the data snapshot (remember to open the TCP port if you have a firewall):

[root@gr02 ~]# nc -l -p 4444 |pv| unpigz -c | xbstream -x -C /var/lib/mysql

Then, start the backup job on the donor:

    [root@gr01 ~]# xtrabackup --user=root --password=*** --backup --parallel=4 --stream=xbstream --target-dir=./ 2> backup.log |pv|pigz -c --fast| nc -w 2 192.168.56.98 4444
    240MiB 0:00:02 [81.4MiB/s] [ <=>

On the joiner side, we will see:

    [root@gr02 ~]# nc -l -p 4444 |pv| unpigz -c | xbstream -x -C /var/lib/mysql
    21.2MiB 0:03:30 [ 103kiB/s] [ <=> ]
    [root@gr02 ~]# du -hs /var/lib/mysql
    241M /var/lib/mysql

BTW, if you noticed the difference in transfer rate between the two, please note that on the donor side I put `|pv|` before the compressor, while on the joiner I put it before the decompressor. This way, I can monitor the compression ratio at the same time!
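To put a number on it, the ratio is simply the uncompressed size reported by `pv` on the donor divided by the compressed size reported by `pv` on the joiner. A minimal sketch, where `compression_ratio` is a hypothetical helper and both sizes are given in the same unit:

```shell
#!/bin/sh
# Hypothetical helper: ratio between raw and compressed stream sizes.
# $1: bytes (or MiB) seen before the compressor, $2: same unit, after compression.
compression_ratio() {
    awk -v raw="$1" -v packed="$2" 'BEGIN {
        if (packed + 0 <= 0) exit 1      # avoid dividing by zero
        printf "%.1f\n", raw / packed
    }'
}

# Values from the two pv outputs above: 240 MiB raw, 21.2 MiB compressed.
compression_ratio 240 21.2
```

With the figures from this example, the stream shrinks by a factor of roughly eleven on the wire.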

The next step will be to prepare the backup on joiner:

    [root@gr02 ~]# xtrabackup --use-memory=1G --prepare --target-dir=/var/lib/mysql 2>prepare.log
    [root@gr02 ~]# tail -1 prepare.log
    181019 19:18:56 completed OK!

and fix the files ownership:

[root@gr02 ~]# chown -R mysql:mysql /var/lib/mysql

Now we should verify the GTID position information and restart the joiner (I have `group_replication_start_on_boot=off` in my.cnf):

    [root@gr02 ~]# cat /var/lib/mysql/xtrabackup_binlog_info
    binlog.000023 893 aaaaaaaa-aaaa-aaaa-aaaa-aaaaaaaaaaaa:1-302662
    [root@gr02 ~]# systemctl restart mysqld

Now, let’s check if the position reported by the node is consistent with the above:

    gr02 > select @@GLOBAL.gtid_executed;
    +-----------------------------------------------+
    | @@GLOBAL.gtid_executed                        |
    +-----------------------------------------------+
    | aaaaaaaa-aaaa-aaaa-aaaa-aaaaaaaaaaaa:1-302660 |
    +-----------------------------------------------+
    1 row in set (0.00 sec)

No, it is not. We have to correct it:

    gr02 > reset master; set global gtid_purged="aaaaaaaa-aaaa-aaaa-aaaa-aaaaaaaaaaaa:1-302662";
    Query OK, 0 rows affected (0.05 sec)
    Query OK, 0 rows affected (0.00 sec)
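The comparison between the backup's GTID set and the value reported by the server can be scripted. This is a sketch under assumptions: `binlog_info_gtid` and `gtid_matches_backup` are hypothetical helpers, the file is assumed to hold the binlog name, position, and GTID set in that order (as in the output above), and the comparison is a plain string match after stripping whitespace, not a true GTID set comparison.

```shell
#!/bin/sh
# Hypothetical helper: print the GTID set stored in an xtrabackup_binlog_info file.
# Assumption: a long GTID set may continue on following lines, so all lines are
# joined and whitespace is removed.
binlog_info_gtid() {
    awk '
        NR == 1 { $1 = ""; $2 = "" }     # drop the binlog file name and position
        { gsub(/[ \t]/, ""); printf "%s", $0 }
        END { print "" }
    ' "$1"
}

# Hypothetical helper: succeed if the server-reported gtid_executed ($2)
# is textually identical to the GTID set recorded in the backup ($1).
gtid_matches_backup() {
    reported=$(printf '%s' "$2" | tr -d ' \t\n')
    [ "$(binlog_info_gtid "$1")" = "$reported" ]
}
```

For example, `gtid_matches_backup /var/lib/mysql/xtrabackup_binlog_info "$reported"` would fail for the `1-302660` value shown above, which is the signal that `gtid_purged` has to be set by hand before starting Group Replication.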

Finally, start the replication:

    gr02 > START GROUP_REPLICATION;
    Query OK, 0 rows affected (3.91 sec)

Let’s check the cluster status again:

gr01 > select * from performance_schema.replication_group_members\G

*************************** 1. row ***************************

CHANNEL_NAME: group_replication_applier

MEMBER_ID: 76df8268-c95e-11e8-b55d-525400cae48b

MEMBER_HOST: gr01

MEMBER_PORT: 3306

MEMBER_STATE: ONLINE

MEMBER_ROLE: PRIMARY

MEMBER_VERSION: 8.0.13

*************************** 2. row ***************************

CHANNEL_NAME: group_replication_applier

MEMBER_ID: a60a4124-d3d4-11e8-8ef2-525400cae48b

MEMBER_HOST: gr02

MEMBER_PORT: 3306

MEMBER_STATE: ONLINE

MEMBER_ROLE: SECONDARY

MEMBER_VERSION: 8.0.13

2 rows in set (0.00 sec)

gr01 > select * from performance_schema.replication_group_member_stats\G

*************************** 1. row ***************************

CHANNEL_NAME: group_replication_applier

VIEW_ID: 15399708149765074:4

MEMBER_ID: 76df8268-c95e-11e8-b55d-525400cae48b

COUNT_TRANSACTIONS_IN_QUEUE: 0

COUNT_TRANSACTIONS_CHECKED: 3

COUNT_CONFLICTS_DETECTED: 0

COUNT_TRANSACTIONS_ROWS_VALIDATING: 0

TRANSACTIONS_COMMITTED_ALL_MEMBERS: aaaaaaaa-aaaa-aaaa-aaaa-aaaaaaaaaaaa:1-302666

LAST_CONFLICT_FREE_TRANSACTION: aaaaaaaa-aaaa-aaaa-aaaa-aaaaaaaaaaaa:302665

COUNT_TRANSACTIONS_REMOTE_IN_APPLIER_QUEUE: 0

COUNT_TRANSACTIONS_REMOTE_APPLIED: 2

COUNT_TRANSACTIONS_LOCAL_PROPOSED: 3

COUNT_TRANSACTIONS_LOCAL_ROLLBACK: 0

*************************** 2. row ***************************

CHANNEL_NAME: group_replication_applier

VIEW_ID: 15399708149765074:4

MEMBER_ID: a60a4124-d3d4-11e8-8ef2-525400cae48b

COUNT_TRANSACTIONS_IN_QUEUE: 0

COUNT_TRANSACTIONS_CHECKED: 0

COUNT_CONFLICTS_DETECTED: 0

COUNT_TRANSACTIONS_ROWS_VALIDATING: 0

TRANSACTIONS_COMMITTED_ALL_MEMBERS: aaaaaaaa-aaaa-aaaa-aaaa-aaaaaaaaaaaa:1-302666

LAST_CONFLICT_FREE_TRANSACTION:

COUNT_TRANSACTIONS_REMOTE_IN_APPLIER_QUEUE: 0

COUNT_TRANSACTIONS_REMOTE_APPLIED: 0

COUNT_TRANSACTIONS_LOCAL_PROPOSED: 0

COUNT_TRANSACTIONS_LOCAL_ROLLBACK: 0

2 rows in set (0.00 sec)

OK, our cluster is consistent! The new node joined successfully as secondary. We can proceed to add more nodes!

09

2018

InnoDB Cluster in a nutshell – Part 1

Since MySQL 5.7 we have a new player in the field, MySQL InnoDB Cluster. This is an Oracle High Availability solution that can be easily installed over MySQL to get High Availability with multi-master capabilities and automatic failover.

This solution consists of three components: Group Replication, MySQL Router, and MySQL Shell. You can see how these components interact in this graphic:

In this three-part blog series, we will cover each of these components to get a sense of what this tool provides and how it can help with architecture decisions.

Group Replication

This is the actual High Availability solution, and a while ago I wrote a short review of it when it still was in its labs stage. It has improved a lot since then.

This solution is based on a plugin that has to be installed (it is not installed by default) and works on top of built-in replication. So it relies on binary logs and relay logs to apply writes to members of the cluster.

The main concept about this new type of replication is that all members of a cluster (i.e. each node) are considered equals. This means there is no master-slave (where slaves follow master) but members that apply transactions based on a consensus algorithm. This algorithm forces all members of a cluster to commit or reject a given transaction following decisions made by each individual member.

In practical terms, this means each member of the cluster has to decide if a transaction can be committed (i.e. no conflicts) or should be rolled back but all other members follow this decision. In other words, the transaction is either committed or rolled back according to the majority of members in a consistent state.

To achieve this, there is a service that exposes a view of cluster status indicating which members form the cluster and the current status of each of them. Additionally, Group Replication requires GTID and Row Based Replication (or `binlog_format=ROW`) to distribute each writeset with row changes between members. This is done via binary logs and relay logs, but before each transaction is pushed to binary/relay logs it has to be acknowledged by a majority of members of the cluster, in other words through consensus. This process is synchronous, unlike legacy replication. After a transaction is replicated, a certification process commits the transaction, thus making it durable.

Here appears a new concept, the certification process, which is the process that confirms if a writeset can be applied/committed (i.e. a row change can be done without conflicts) after replication of the transaction is complete.

Basically, this process consists of inspecting writesets to check if there are conflicts (i.e. the same row updated by concurrent transactions). Based on an order set in the writeset, the conflict is resolved by ‘first-committer wins’, while the second transaction is rolled back on the originator. Finally, the transaction is pushed to binary/relay logs and committed.
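The ‘first-committer wins’ rule can be illustrated with a toy model. This is a deliberately simplified sketch, not how the plugin is implemented: `certify` is a hypothetical function, each transaction touches a single row, and the "snapshot" column is assumed to be the number of certified transactions the originator had already seen when it executed.

```shell
#!/bin/sh
# Toy certification model (illustrative only).
# Input on stdin, in the agreed delivery order, one transaction per line:
#   <txn-id> <row-key> <snapshot>
# A transaction is rolled back if its row was changed by a transaction that
# was certified after the snapshot it executed against.
certify() {
    awk '{
        id = $1; row = $2; snap = $3 + 0
        if ((row in last) && last[row] > snap) {
            print id, "ROLLBACK"          # a concurrent writer got there first
        } else {
            seq++                         # position in the certified sequence
            last[row] = seq
            print id, "COMMIT"
        }
    }'
}

# T1 and T2 update row42 concurrently (both saw snapshot 0): T1 wins.
# T4 also updates row42, but it had already seen T1 and T3, so it passes.
printf '%s\n' 'T1 row42 0' 'T2 row42 0' 'T3 row7 0' 'T4 row42 2' | certify
```

Because every member processes the same transactions in the same order, each one reaches the same commit or rollback decision without asking the others.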

Solution features

Some other features provided by this solution are:

- Single-primary or multi-primary modes, meaning that the cluster can operate with a single writer and multiple readers (recommended and default setup), or with multiple writers where all nodes are capable of accepting write transactions. The latter comes at the cost of a performance penalty due to conflict resolution.

- Automatic failure detection, where an internal mechanism is able to detect a failed node (i.e. a crash, network problems, etc) and decide to exclude it from the cluster automatically. Also if a member can’t communicate with the cluster and gets isolated, it can’t accept transactions. This ensures that cluster data is not impacted by this situation.

- Fault tolerance. This is the strategy that the cluster uses to support failing members. As described above, this is based on a majority. A cluster needs at least three members to support one node failure because the other two members will keep the majority. The bigger the number of nodes, the bigger the number of failing nodes the cluster supports. The maximum number of members (nodes) in a cluster is currently limited to nine. If it has nine members, then the majority is kept by five or more active members. In other words, a cluster of nine would support up to four failing nodes.
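The majority rule behind fault tolerance is simple arithmetic, sketched below in Python. The function names are only illustrative: a group of n members keeps quorum while more than half of them are reachable, so it can lose n minus that majority.

```python
def majority(members):
    """Smallest number of members that is more than half of the group."""
    return members // 2 + 1


def tolerated_failures(members):
    """How many members can fail while the rest still form a majority."""
    return members - majority(members)


for size in (3, 4, 5, 7):
    print(size, majority(size), tolerated_failures(size))
```

Note that an even-sized group does not buy extra tolerance: four members still survive only one failure, the same as three.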

We will not cover installation and configuration aspects now. This will probably come with a new series of blogs where we can cover not only deployment but also use cases and so on.

In the next post we will talk about the next cluster component: MySQL Router, so stay tuned.

The post InnoDB Cluster in a nutshell – Part 1 appeared first on Percona Database Performance Blog.

25

2017

Percona Live Europe: Tutorials Day

Welcome to the first day of the Percona Live Open Source Database Conference Europe 2017: Tutorials day! Technically the first day of the conference, this day focused on providing hands-on tutorials for people interested in learning directly how to use open source tools and technologies.

Today attendees went to training sessions taught by open source database experts and got first-hand experience configuring, working with, and experimenting with various open source technologies and software.

The first full day (which includes opening keynote speakers and breakout sessions) starts Tuesday 9/26 at 9:15 am.

Some of the tutorial topics covered today were:

Monitoring MySQL Performance with Percona Monitoring and Management (PMM)

Michael Coburn, Percona

This was a hands-on tutorial covering how to set up monitoring for MySQL database servers using the Percona Monitoring and Management (PMM) platform. PMM is an open-source collection of tools for managing and monitoring MySQL and MongoDB performance. It provides thorough time-based analysis for database servers to ensure that they work as efficiently as possible.

We learned about:

- The best practices on MySQL monitoring

- Metrics and time series

- Data collection, management and visualization tools

- Monitoring deployment

- How to use graphs to spot performance issues

- Query analytics

- Alerts

- Trending and capacity planning

- How to monitor HA

Rene Cannao, ProxySQL

ProxySQL is an open source proxy for MySQL that can provide HA and high performance with no changes in the application, using several built-in features and integration with clustering software. Those were only a few of the features we learned about in this hands-on tutorial.

MongoDB: Sharded Cluster Tutorial

Jason Terpko, ObjectRocket

Antonios Giannopoulos, ObjectRocket

This tutorial guided us through the many considerations when deploying a sharded cluster. It covered the services that make up a sharded cluster, configuration recommendations for these services, shard key selection, use cases, and how data is managed within a sharded cluster. Maintaining a sharded cluster also has its challenges. We reviewed these challenges and how you can prevent them with proper design or ways to resolve them if they exist today.

InnoDB Architecture and Performance Optimization

Peter Zaitsev, Percona

InnoDB is the most commonly used storage engine for MySQL and Percona Server for MySQL. It is the focus of most of the storage engine development by the MySQL and Percona Server for MySQL development teams.

In this tutorial, we looked at the InnoDB architecture, including new feature developments for InnoDB in MySQL 5.7 and Percona Server for MySQL 5.7. Peter explained how to use InnoDB in a database environment to get the best application performance and provided specific advice on server configuration, schema design, application architecture and hardware choices.

Peter updated this tutorial from previous versions to cover new MySQL 5.7 and Percona Server for MySQL 5.7 InnoDB features.

Join us tomorrow for the first full day of the Percona Live Open Source Database Conference Europe 2017!

01

2017

Group Replication: the Sweet and the Sour

In this blog, we’ll look at group replication and how it deals with flow control (FC) and replication lag.

Overview

In the last few months, we had two main actors in the MySQL ecosystem: ProxySQL and Group-Replication (with the evolution to InnoDB Cluster).

While I have extensively covered the first, my last serious work on Group Replication dates back to a lab version from years ago.

Given that Oracle decided to declare it GA, and Percona’s decision to provide some level of Group Replication support, I decided it was time for me to take a look at it again.

We’ve already seen a lot of coverage of many Group Replication topics. There are articles about Group Replication and performance, Group Replication and basic functionalities (or the lack of them, like automatic node provisioning), Group Replication and ProxySQL, and so on.

But one question kept coming up over and over in my mind. If Group Replication and InnoDB Cluster have to work as an alternative to other (virtually) synchronous replication mechanisms, what changes do our customers need to consider if they want to move from one to the other?

Solutions using Galera (like Percona XtraDB Cluster) must take into account a central concept: clusters are data-centric. What matters is the data and the data state. Both must be the same on each node at any given time (commit/apply). To guarantee this, Percona XtraDB Cluster (and other solutions) use a set of data validation and Flow Control processes that work to ensure a consistent cluster data set on each node.

The upshot of this principle is that an application can query ANY node in a Percona XtraDB Cluster and get the same data, or write to ANY node and know that the data is visible everywhere in the cluster at (virtually) the same time.

Last but not least, inconsistent nodes should be excluded and either rebuilt or fixed before rejoining the cluster.

If you think about it, this is very useful. Guaranteeing consistency across nodes allows you to transparently split write/read operations, failover from one node to another with very few issues, and more.

When I conceived of this blog on Group Replication (or InnoDB Cluster), I put myself in the customer’s shoes. I asked myself: “Aside from all the other things we know (see above), what is the real impact of moving from Percona XtraDB Cluster to Group Replication/InnoDB Cluster for my application? Since Group Replication still (basically) uses replication with binlogs and relay logs, is there also a Flow Control mechanism?” An alarm bell started to ring in my mind.

My answer is: “Let’s do a proof of concept (PoC), and see what is really going on.”

The POC

I set up a simple set of servers using Group Replication with a very basic application performing writes on a single writer node, and (eventually) reads on the other nodes.

You can find the schema definition here. Mainly I used the four tables from my windmills test suite, nothing special or specifically designed for Group Replication. I’ve used this test a lot for Percona XtraDB Cluster in the past, so it was a perfect fit.

Test Definition

The application will do very simple work, and I wanted to test four main cases:

- One thread performing one insert at each transaction

- One thread performing 50 batched inserts at each transaction

- Eight threads performing one insert at each transaction

- Eight threads performing 50 batched inserts at each transaction

As you can see, a pretty simple set of operations. Then I decided to test it using the following four conditions on the servers:

- One slave worker, FC as default

- One slave worker, FC set to 25

- Eight slave workers, FC as default

- Eight slave workers, FC set to 25

Again nothing weird or strange from my point of view. I used four nodes:

- Gr1 Writer

- Gr2 Reader

- Gr3 Reader minimal latency (~10ms)

- Gr4 Reader minimal latency (~10ms)

Finally, I had to be sure I measured the lag in a way that allowed me to reference it consistently on all nodes.

I think we can safely say that the incoming GTID (Received_transaction_set from replication_connection_status) is definitely the last change applied to the master that the slave node knows about. More recent changes could have occurred, but network delay can prevent them from being “received.” The other point of reference is GTID_EXECUTED, which refers to the latest GTID processed on the node itself.

The closest query that can track the distance will be:

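```sql
-- Sequence number extracted from the executed GTID set on this node
SELECT @last_exec := SUBSTRING_INDEX(SUBSTRING_INDEX(SUBSTRING_INDEX(@@global.GTID_EXECUTED, ':', -2), ':', 1), '-', -1) last_executed;

-- Sequence number extracted from the GTID set received on the applier channel
SELECT @last_rec := SUBSTRING_INDEX(SUBSTRING_INDEX(SUBSTRING_INDEX(Received_transaction_set, ':', -2), ':', 1), '-', -1) last_received
FROM performance_schema.replication_connection_status
WHERE Channel_name = 'group_replication_applier';

-- Distance, in entries, between what was received and what was executed
SELECT (@last_rec - @last_exec) AS real_lag;
```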

Or in the case of a single worker:

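```sql
-- Sequence number extracted from the executed GTID set on this node
SELECT @last_exec := SUBSTRING_INDEX(SUBSTRING_INDEX(@@global.GTID_EXECUTED, ':', -1), '-', -1) last_executed;

-- Sequence number extracted from the GTID set received on the applier channel
SELECT @last_rec := SUBSTRING_INDEX(SUBSTRING_INDEX(Received_transaction_set, ':', -1), '-', -1) last_received
FROM performance_schema.replication_connection_status
WHERE Channel_name = 'group_replication_applier';

-- Distance, in entries, between what was received and what was executed
SELECT (@last_rec - @last_exec) AS real_lag;
```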

The result will be something like this:

```
+---------------+
| last_executed |
+---------------+
| 23607         |
+---------------+

+---------------+
| last_received |
+---------------+
| 23607         |
+---------------+

+----------+
| real_lag |
+----------+
|        0 |
+----------+
```
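If you prefer to compute the distance on the client side, the same idea can be sketched in Python. This is only an illustration under a few assumptions: the helper names are mine, the two GTID set strings have already been fetched from the server, and the sets use the classic `uuid:interval[:interval]` form (no tagged GTIDs). It compares the highest sequence number received with the highest one executed for a given source UUID.

```python
def parse_gtid_set(gtid_set):
    """Return {source_uuid: [(start, end), ...]} for a GTID set string."""
    result = {}
    for part in gtid_set.replace("\n", "").split(","):
        part = part.strip()
        if not part:
            continue
        uuid, *intervals = part.split(":")
        ranges = result.setdefault(uuid, [])
        for interval in intervals:
            start, _, end = interval.partition("-")
            # A single transaction is written as "5", a range as "1-5".
            ranges.append((int(start), int(end or start)))
    return result


def last_transaction(gtid_set, uuid):
    """Highest sequence number present for the given source UUID (0 if none)."""
    ranges = parse_gtid_set(gtid_set).get(uuid, [])
    return max((end for _, end in ranges), default=0)


def entries_lag(received, executed, uuid):
    """Entries received from the group but not yet executed locally."""
    return last_transaction(received, uuid) - last_transaction(executed, uuid)


# Hypothetical group UUID and values, chosen only for the example.
group = "aaaaaaaa-aaaa-aaaa-aaaa-aaaaaaaaaaaa"
print(entries_lag(f"{group}:1-23607", f"{group}:1-23607", group))
```

Like the SQL above, this only looks at the last sequence number, so it assumes there are no gaps between what was received and what was executed.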

The whole set of tests can be found here, with all the commands you need to run the application (you can find it here) and replicate the tests. I will focus on the results (otherwise this blog post would be far too long), but I invite you to see the details.

The Results

Efficiency on Writer by Execution Time and Rows/Sec

Using the raw data from the tests (Excel spreadsheet available here), I was interested in identifying if and how the Writer is affected by the use of Group Replication and flow control.

Reviewing the graph, we can see that the Writer has a linear increase in the execution time (when using default flow control) that matches the increase in the load. Nothing there is concerning, and all-in-all we see what is expected if the load is light. The volume of rows at the end justifies the execution time.

It’s a different scenario if we use flow control. The execution time increases significantly in both cases (single worker/multiple workers). In the worst case (eight threads, 50 inserts batch) it becomes four times higher than the same load without flow control.

What happens to the inserted rows? In the application, I traced the rows inserted/sec. It is easy to see what is going on there:

We can see that the Writer with flow control activated inserts less than a third of the rows it processes without flow control.

We can definitely say that flow control has a significant impact on the Writer performance. To clarify, let’s look at this graph:

Without flow control, the Writer processes a high volume of rows in a limited amount of time (results from the test of eight workers, eight threads, 50 insert batch). With flow control, the situation changes drastically. The Writer takes a long time processing a significantly smaller number of rows/sec. In short, performance drops significantly.

But hey, I’m OK with that if it means having a consistent data set across all nodes. In the end, Percona XtraDB Cluster and similar solutions pay a significant performance price to match the data-centric principle.

Let’s see what happens on the other nodes.

Entries Lag

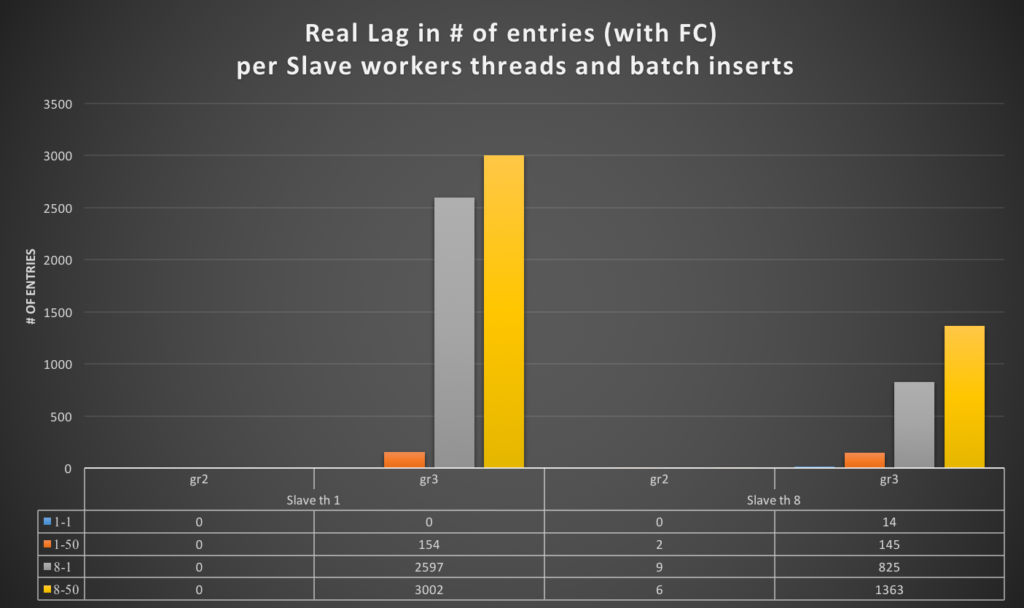

Well, this scenario is not so good:

When NOT using flow control, the nodes lag behind the writer significantly. Remember that by default flow control in Group Replication is set to 25000 entries (I mean 25K of entries!!!).

What happens is that as soon as I put some salt (see load) on the Writer, the slave nodes start to lag. When using the default single worker, that will have a significant impact. While using multiple workers, we see that the lag happens mainly on the node(s) with minimal (10ms) network latency. The sad thing is that it is not really going down with respect to the single thread worker, indicating that the simple minimal latency of 10ms is enough to affect replication.

Time to activate the flow control and have no lag:

Unfortunately, this is not the case. As we can see, the lag of single worker remains high for Gr2 (154 entries). While using multiple workers, the Gr3/4 nodes can perform much better, with significantly less lag (but still high at ~1k entries).

It is important to remember that at this time the Writer is processing one-third or less of the rows it is normally able to. It is also important to note that I set the entry limit in flow control to 25, and the Gr3 (and Gr4) nodes are still lagging more than 1K entries behind.

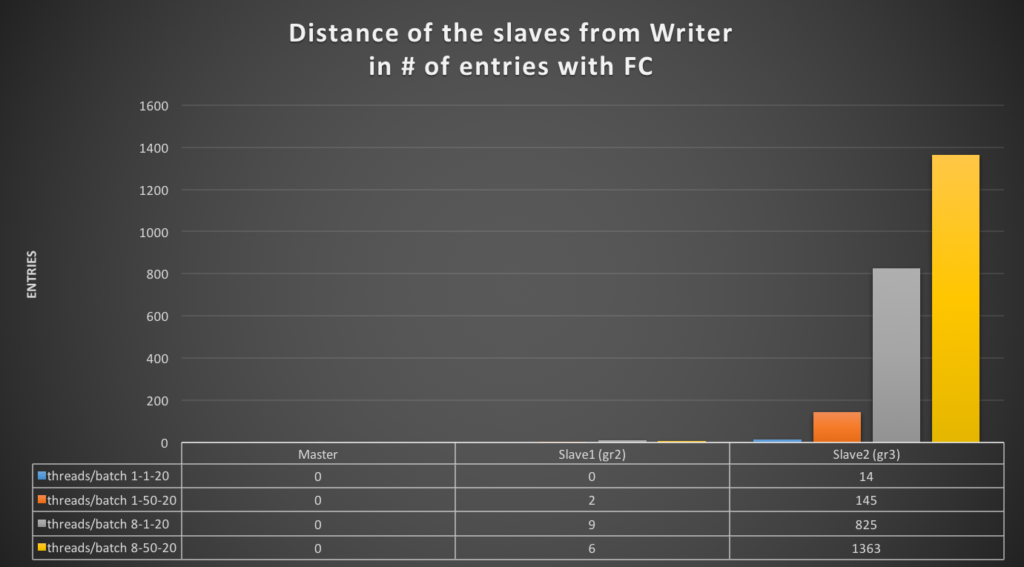

To clarify, let’s check the two graphs below:

Using the Writer (Master) as a baseline in entry #N, without flow control, the nodes (slaves) using Group Replication start to significantly lag behind the writer (even with a light load).

The distance in this PoC ranged from very minimal (with 58 entries), up to much higher loads (3849 entries):

Using flow control, the Writer (Master) diverges less, as expected. But even though it takes a significant drop in performance (one-third or less), the nodes still lag. The worst case is up to 1363 entries.

I need to underline here that we have no further way (that I am aware of, anyway) to tune the lag and prevent it from happening.

This means an application cannot transparently split writes/reads and expect consistency. The gap is too high.

A Graph That Tells Us a Story

I used Percona Monitoring and Management (PMM) to keep an eye on the nodes while doing the tests. One of the graphs really showed me that Group Replication still has some “limits” as the replication mechanism for a cluster:

This graph shows the MySQL queries executed on all four nodes in the test using eight threads, 50-insert batches, and flow control.

As you can see, the Gr1 (Writer) is the first one to take off, followed by Gr2. Nodes Gr3 and Gr4 require a bit more, given the binlog transmission (and 10ms delay). Once the data is there, they match (inconsistently) the Gr2 node. This is an effect of flow control asking the Master to slow down. But as previously seen, the nodes will never match the Writer. When the load test is over, the nodes continue to process the queue for an additional ~130 seconds. Considering that the whole load takes 420 seconds on the Writer, this means that one-third of the total time on the Writer is spent syncing the slaves AFTERWARDS.

The above graph shows the same test without flow control. It is interesting to see how the Writer moved above 300 queries/sec, while Gr2 stayed around 200 and Gr3/4 far below. The Writer was able to process the whole load in ~120 seconds instead of 420, while Gr3/4 continue to process the load for an additional ~360 seconds.

This means that without flow control set, the nodes lag around 360 seconds behind the Master. With flow control set to 25, they lag 130 seconds.
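To put those numbers side by side, here is the arithmetic as a few lines of Python. The function name is just for illustration, and the inputs are the approximate timings read from the graphs above.

```python
def catch_up_ratio(writer_seconds, extra_reader_seconds):
    """Extra time the readers need, relative to the time the Writer spent on the load."""
    return extra_reader_seconds / writer_seconds


with_fc = catch_up_ratio(420, 130)     # flow control set to 25
without_fc = catch_up_ratio(120, 360)  # default flow control

print(f"with flow control:    {with_fc:.2f}")
print(f"without flow control: {without_fc:.2f}")
```

With flow control, the readers need roughly a third of the Writer's run time to catch up. Without it, they need three times the Writer's run time.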

This is a significant gap.

Conclusions

Going back to the reason why I started this PoC, it looks like my application(s) are not a good fit for Group Replication, given that I have set up Percona XtraDB Cluster to scale out the reads and efficiently move my writer to another node when I need to.

Group Replication is still based on asynchronous replication (as my colleague Kenny said). It makes sense in many other cases, but it doesn’t compare to solutions based on virtually synchronous replication. It still requires a lot of refinement.

On the other hand, for applications that can afford to have a significant gap between writers and readers it is probably fine. But … doesn’t standard replication already cover that?

Reviewing the Oracle documentation (https://dev.mysql.com/doc/refman/5.7/en/group-replication-background.html), I can see why Group Replication as part of the InnoDB cluster could help improve high availability when compared to standard replication.

But I also think it is important to understand that Group Replication (and derived solutions like InnoDB cluster) is not comparable to, or a replacement for, data-centric solutions such as Percona XtraDB Cluster. At least up to now.

Good MySQL to everyone.